Unix Pipes Under Load: Streaming, Barriers, Backpressure, and Bottlenecks

1. The Unix pipeline

Every Unix user knows the pipe operator. Type ls | wc -l, and two independent programs exchange data as if they had been designed together. That simplicity reflects a deliberate design philosophy that Doug McIlroy articulated at Bell Labs in the 1970s: write programs that do one thing well, and combine them into complex workflows by letting them communicate through a universal interface, the text stream.

Essentially, a Unix pipe allows one program to send its output directly into another program’s input without saving a temporary file to disk. It turns two independent tools into a single data pipeline.

What is less obvious is why pipes work so well for large data. A streaming pipeline processing a multi-gigabyte log file uses roughly the same memory as one processing a kilobyte: the data flows through rather than accumulating. This streaming behavior is the pipe's defining characteristic, and also its most misunderstood one.

This article examines pipes from that angle: not as a convenience feature, but as a memory-efficient streaming primitive. We will look at how they work, where they excel, and where they quietly fail.

2. The McIlroy story

In 1986, Jon Bentley asked Donald Knuth to write a program for his Programming Pearls column in Communications of the ACM, solving a simple problem: read a file, find the most frequently used words, and print the top results. Knuth produced a literate programming masterpiece several pages long: a carefully crafted program in WEB, his Pascal-based literate programming system, built around a custom data structure optimized for the task.

Doug McIlroy reviewed it and responded with a six-stage shell pipeline, originally laid out one command per line:

tr -cs A-Za-z '\n' | tr A-Z a-z | sort | uniq -c | sort -rn | head

The pipeline reads its standard input, splits it into words (one per line), lowercases them, sorts, counts occurrences, sorts by frequency, and prints the top results. It requires no custom data structures, no memory management, no compilation. It also processes files larger than available RAM without modification: the streaming stages hold only a small buffer at a time, and sort falls back to merging sorted runs from temporary files when its input does not fit in memory.
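To make each stage's role explicit, here is the same pipeline laid out one stage per line, as a minimal sketch wrapped in a shell function. The function name top_words and its optional count argument are hypothetical conveniences; the stages are the ones above, with head -n standing in for plain head.

```shell
#!/bin/sh
# McIlroy's word-frequency pipeline, one stage per line.
# top_words is a hypothetical helper; $1 is how many words to print (default 10).
top_words() {
    tr -cs A-Za-z '\n' |    # stream: turn each run of non-letters into one newline
    tr A-Z a-z |            # stream: fold to lower case so "The" and "the" match
    sort |                  # barrier: bring identical words together
    uniq -c |               # stream: collapse each run into "count word"
    sort -rn |              # barrier: order by count, highest first
    head -n "${1:-10}"      # stream: keep the top N lines
}

printf 'The cat saw the dog. The dog ran!\n' | top_words 2
```

On that sample the output is "the" with a count of 3 followed by "dog" with 2; uniq -c pads the counts with leading spaces.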

McIlroy's point was not that shell pipelines are always better than carefully written programs. It was that composition of simple tools can match or exceed purpose-built solutions, at a fraction of the complexity. The memory efficiency was not a design goal — it was a consequence of how pipes work.

3. How pipes work

When you type ls | wc -l, the shell creates a pipe before either program starts. A pipe is a kernel-managed buffer (64KB by default on Linux) with two ends: a write end and a read end. The shell connects the stdout of ls to the write end and the stdin of wc to the read end, using the dup2 system call. Neither program knows or cares about the pipe: ls writes to what it thinks is standard output, and wc reads from what it thinks is standard input.

Behind the scenes, the shell uses a handful of system calls to wire this together. First, pipe() creates the buffer and returns two file descriptors, one for reading and one for writing. Then fork() is called twice, creating two child processes that both inherit those file descriptors. In each child, dup2() redirects the relevant stream: stdout in the first child to the pipe's write end, stdin in the second child to the pipe's read end. Each child then closes the original pipe descriptors and calls exec() to become ls and wc respectively. The shell closes its own copies too, because wc only sees end-of-file once every copy of the write end is closed. From that point, the two programs run independently, connected only through the kernel buffer.
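The shell's wiring cannot be replayed directly from a script, since pipe() and dup2() are system calls, but a named pipe (FIFO) created with mkfifo is a close analogue whose two ends are visible in the filesystem. The sketch below is a swapped-in illustration, not what the shell literally does: a background process writes into the FIFO, a second process reads from it, and neither knows it is talking to a pipe. The temporary paths are arbitrary.

```shell
#!/bin/sh
# A FIFO behaves like the anonymous pipe the shell creates with pipe(),
# except that it has a name, so the two ends can be opened separately.
set -eu

dir=$(mktemp -d)
fifo="$dir/p"
mkfifo "$fifo"

# Writer: opening the write end blocks until a reader opens the other end.
printf 'alpha\nbeta\ngamma\n' > "$fifo" &

# Reader: wc reads what it thinks is ordinary standard input.
count=$(wc -l < "$fifo" | tr -d ' ')
wait                 # reap the background writer

rm -r "$dir"
echo "$count"        # prints 3
```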

Both processes start concurrently. When ls fills the 64KB buffer, the kernel blocks its writes until wc reads some data and makes room. When the buffer is empty and ls hasn't finished writing, wc blocks and waits. This backpressure mechanism is why pipelines are memory-efficient: at most 64KB of data sits in the pipe at any moment, regardless of how large the input is. This holds for streaming stages, programs like grep, awk, or sed that process input line by line. Barrier stages like sort are a different matter: they must read all input before producing any output, so their memory usage grows with input size regardless of the pipe buffer. (GNU sort caps this at its buffer size and spills sorted runs to temporary files beyond that, trading memory for disk I/O.)
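A quick way to watch backpressure is to attach an endless producer to a reader that stops early. In the sketch below, yes would print forever, yet the pipeline finishes and stays small, because yes can never run more than one pipe buffer ahead of head. The second command pushes about 100 MB through the pipes; head -c is assumed to be available (GNU and BSD head both provide it).

```shell
#!/bin/sh
# yes(1) prints "y" forever. Its writes block whenever the pipe buffer is
# full; once head exits, the next write raises SIGPIPE and yes terminates.
yes | head -n 3

# Roughly 100 MB flows through the pipes, but only about one buffer's worth
# per pipe is in flight at any moment, so memory stays flat.
bytes=$(yes 'a log line' | head -c 100000000 | wc -c | tr -d ' ')
echo "$bytes"   # prints 100000000
```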

This also bounds what a pipeline can do in parallel. Each stage is a separate process, so on a multi-core machine the stages can run at the same time, but each stage is still a single process working through its part of the stream in order. A fast producer is throttled by a slow consumer, and a slow producer starves a fast consumer. The 64KB buffer smooths out brief mismatches, but it does not change the fundamental constraint: the slowest stage becomes a bottleneck that limits total throughput.

4. Streaming vs. barrier stages

The backpressure mechanism described in section 3 creates an important distinction between two fundamentally different kinds of pipeline stages. Understanding this distinction is the key to reasoning about pipeline performance and memory usage.

A streaming stage processes input incrementally and produces output as it goes. grep reads a line, tests it, emits or discards it, and moves to the next. tr, cut, and sed stream as well. Their memory footprint is constant regardless of input size, and they never block the pipeline longer than it takes to process one record.

A barrier stage must consume its entire input before producing any output. sort is the canonical example: you cannot emit the smallest element until you have seen all elements. tac reverses a file, so it must read everything before writing anything. uniq -c itself streams, but because it only counts adjacent duplicates it is almost always preceded by a sort, so by the time it runs the barrier has already been paid. Barriers break the streaming contract: memory grows with input size, and everything downstream waits.
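The difference is easy to observe with a slow producer. The sketch below feeds three lines, one second apart, through either cat (standing in for any streaming stage) or sort, and reports how long the consumer waited for its first line. producer and first_line_delay are hypothetical helper names, and date +%s is assumed to be available (GNU and BSD date support it). cat is used because it writes data as soon as it reads it; tools such as grep block-buffer their output when writing to a pipe, which delays output without changing their memory use.

```shell
#!/bin/sh
# Emit three lines, one second apart, then end the stream.
producer() { echo 3; sleep 1; echo 1; sleep 1; echo 2; }

# Run "producer | STAGE" and print how many whole seconds passed before the
# first line came out of STAGE.
first_line_delay() {
    start=$(date +%s)
    producer | "$@" | {
        read -r line                    # blocks until the stage emits a line
        echo $(( $(date +%s) - start ))
        cat > /dev/null                 # drain the rest so upstream finishes cleanly
    }
}

echo "streaming (cat): $(first_line_delay cat) s"   # first line arrives almost at once
echo "barrier (sort):  $(first_line_delay sort) s"  # waits for end of input, about 2 s
```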

Consider the classic word frequency pipeline from section 2. The two tr stages stream. The first sort then consumes everything, gigabytes if necessary, before uniq -c sees a single line. uniq -c streams quickly over the sorted output, then the second sort again consumes everything before head gets its ten lines. Two full barriers split the run into three sequential phases. The pipeline does not behave like six processes working side by side; it behaves like three batches run one after another, each with streaming stages inside it.

You can observe this directly. Run /usr/bin/time -v (GNU time) on the full pipeline and on the same pipeline cut off after the first sort, using the same input:

/usr/bin/time -v sh -c 'tr -cs A-Za-z "\n" < example.txt | tr A-Z a-z | sort | uniq -c | sort -rn | head'

/usr/bin/time -v sh -c 'tr -cs A-Za-z "\n" < example.txt | tr A-Z a-z | sort > /dev/null'

For example, on The Complete Works of William Shakespeare I measured 0.32 s versus 0.29 s, with practically the same 12.1 MB peak memory in both cases.

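This indicates that the first sort dominates memory consumption for the entire pipeline. Note that the maximum resident set size reported by time -v is the peak of the largest single process in the tree, not the sum across stages, so matching figures mean the same process, the first sort, was the largest in both runs. Everything before it is essentially free, and everything after it operates on a fraction of the original data.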

This has a practical implication: when optimizing a pipeline, identifying and addressing the first barrier is almost always the highest-leverage intervention. Stages before the barrier run for free in terms of memory. Stages after it operate on reduced data. The barrier itself is where the cost lives.

5. Where pipelines work well

The most common real-world pipeline is probably log analysis.

a. Finding the most frequent error messages in a log file looks like this:

grep "ERROR" app.log | sort | uniq -c | sort -rn

grep streams through the file, emitting only matching lines — constant memory, no barrier. Then sort accumulates everything into memory before emitting a single line. uniq -c streams over the sorted output counting consecutive duplicates. The second sort -rn accumulates again to rank by frequency.

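Two barriers in four stages. On a large log file, peak memory is set by the volume of ERROR lines (for the first sort) and the number of distinct messages (for the second), not by the file size: smaller than the file, but not constant either. The counts are only meaningful if matching lines repeat verbatim; if every line carries a timestamp, strip it first (for example with cut), or each line will be counted as unique.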
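Real log lines usually start with a timestamp, which would make every line unique to uniq -c. The sketch below adds a cut stage to drop it and runs the pipeline on a tiny sample log. The log format (date, time, level, message separated by single spaces), the sample lines, and the helper name rank_errors are assumptions for illustration; adjust the cut field list to your own format.

```shell
#!/bin/sh
# rank_errors is a hypothetical helper. Assumed line format:
#   DATE TIME LEVEL message...
rank_errors() {
    grep "ERROR" "$1" |    # stream: keep matching lines only
    cut -d' ' -f3- |       # stream: drop DATE and TIME (assumed format)
    sort |                 # barrier: group identical messages
    uniq -c |              # stream: count each group
    sort -rn               # barrier: rank by count, most frequent first
}

log=$(mktemp)
cat > "$log" <<'EOF'
2024-05-01 10:00:01 ERROR disk full
2024-05-01 10:00:02 INFO started
2024-05-01 10:00:03 ERROR timeout talking to db
2024-05-01 10:00:04 ERROR disk full
EOF

rank_errors "$log"    # "disk full" (2) ranks above the timeout (1)
rm -f "$log"
```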

b. Another common pattern is searching for a string across many files:

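find . -name "*.log" -print0 | xargs -0 grep "ERROR"

find streams filenames one by one into xargs, which batches them into grep invocations. The -print0 and -0 options delimit names with NUL bytes instead of whitespace, so filenames containing spaces or newlines arrive intact. No stage accumulates data: memory stays constant regardless of how many files exist or how large they are.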

This is pipelines doing what they do best: composing simple tools into a workflow that scales to arbitrary input size without modification.
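To check the file search end to end, the sketch below builds a throwaway directory, including a filename with a space in it, and runs the search with NUL-delimited names (find -print0 with xargs -0, supported by GNU and BSD tools). The helper name search_errors and the sample files are hypothetical; grep -h drops filename prefixes, and the final sort only makes the output order predictable.

```shell
#!/bin/sh
# search_errors is a hypothetical helper: grep every *.log file under "$1".
# NUL-delimited names (-print0 / -0) keep "my app.log" as one argument.
search_errors() {
    find "$1" -name "*.log" -print0 | xargs -0 grep -h "ERROR"
}

dir=$(mktemp -d)
printf 'ok\nERROR one\n' > "$dir/app.log"
printf 'ERROR two\n'     > "$dir/my app.log"   # name contains a space
printf 'ERROR three\n'   > "$dir/notes.txt"    # not a .log file, so skipped

search_errors "$dir" | sort    # ERROR one, then ERROR two
rm -r "$dir"
```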

6. Where pipes fall short

Pipelines excel at linear transformations of a single data stream — filtering, counting, reformatting. But not every problem fits that shape. Three categories of problems expose their limits clearly.

Complex state. Pipelines are stateless between stages. Each stage sees only its own input stream, with no knowledge of what other stages have seen or produced. If your processing requires correlating events across records, tracking sequences, or maintaining context that spans multiple passes over the data, you are working against the model. At that point you are no longer composing a pipeline — you are writing a program, and a proper scripting language or tool will serve you better than forcing the logic into shell.

Heavy parallelism. As established in section 3, the slowest stage limits total throughput, and each stage is a single process. A pipeline's concurrency is therefore capped at one process per stage: if your workload is CPU-bound and most of the work sits in one stage, a pipeline will leave cores idle even when the data could be partitioned. This is a consequence of the single-stream model. The pipe was never designed for data-parallel computation.

Joins. Combining two datasets by a common key — the most basic operation in data processing — has no clean pipeline expression. You can approximate it with sort and join, but this requires both inputs to be sorted first, adding two barriers before the actual work begins. For anything beyond trivial cases, a pipeline join is awkward, brittle, and slow compared to a proper tool.
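For reference, here is a minimal sketch of such a join, assuming two whitespace-separated files keyed on their first field. The helper join_by_key and the sample data are hypothetical; join(1) requires both inputs sorted on the join field, which is where the two extra sort barriers come from.

```shell
#!/bin/sh
# join_by_key is a hypothetical helper: inner join of two files on field 1.
join_by_key() {
    a=$(mktemp); b=$(mktemp)
    sort -k1,1 "$1" > "$a"    # barrier 1: join needs sorted input
    sort -k1,1 "$2" > "$b"    # barrier 2: and so does the other side
    join "$a" "$b"            # key, rest of file 1, rest of file 2
    rm -f "$a" "$b"
}

users=$(mktemp); orders=$(mktemp)
printf '2 bob\n1 alice\n3 carol\n' > "$users"
printf '1 book\n3 lamp\n1 pen\n'   > "$orders"

join_by_key "$users" "$orders"    # 1 alice book / 1 alice pen / 3 carol lamp
rm -f "$users" "$orders"
```

Keys present in only one file (bob here) are silently dropped, which is one of the ways such joins turn brittle.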

7. Beyond pipelines

When a pipeline reaches its limits, two directions are worth considering depending on the problem. If the bottleneck is a barrier stage, the pipeline itself can often be restructured. If the bottleneck is parallelism, the pipeline needs external orchestration.

7.1. AWK

AWK can replace entire pipelines in a single pass. It operates in two modes depending on the problem: purely streaming, processing each record and emitting output immediately, or stateful, accumulating data across records using associative arrays and producing output at the end. Most pipeline tools are one or the other — grep and sed stream, sort accumulates. AWK can do both, which makes it effective at eliminating the sort-based barriers that dominate most text processing pipelines.

The most common barrier in a pipeline is sort used purely to prepare input for uniq or uniq -c. AWK's associative arrays make both redundant.

Deduplication without sort:

sort file | uniq

Using AWK:

awk '!x[$0]++' file

A single streaming pass. Each line is checked against an associative array: seen lines are discarded, unseen lines pass through. The tradeoff is explicit: output keeps first-occurrence order, not sorted order, and the array still holds every distinct line, so memory grows with the number of unique lines. When sorted output is not required, the barrier is gone entirely.

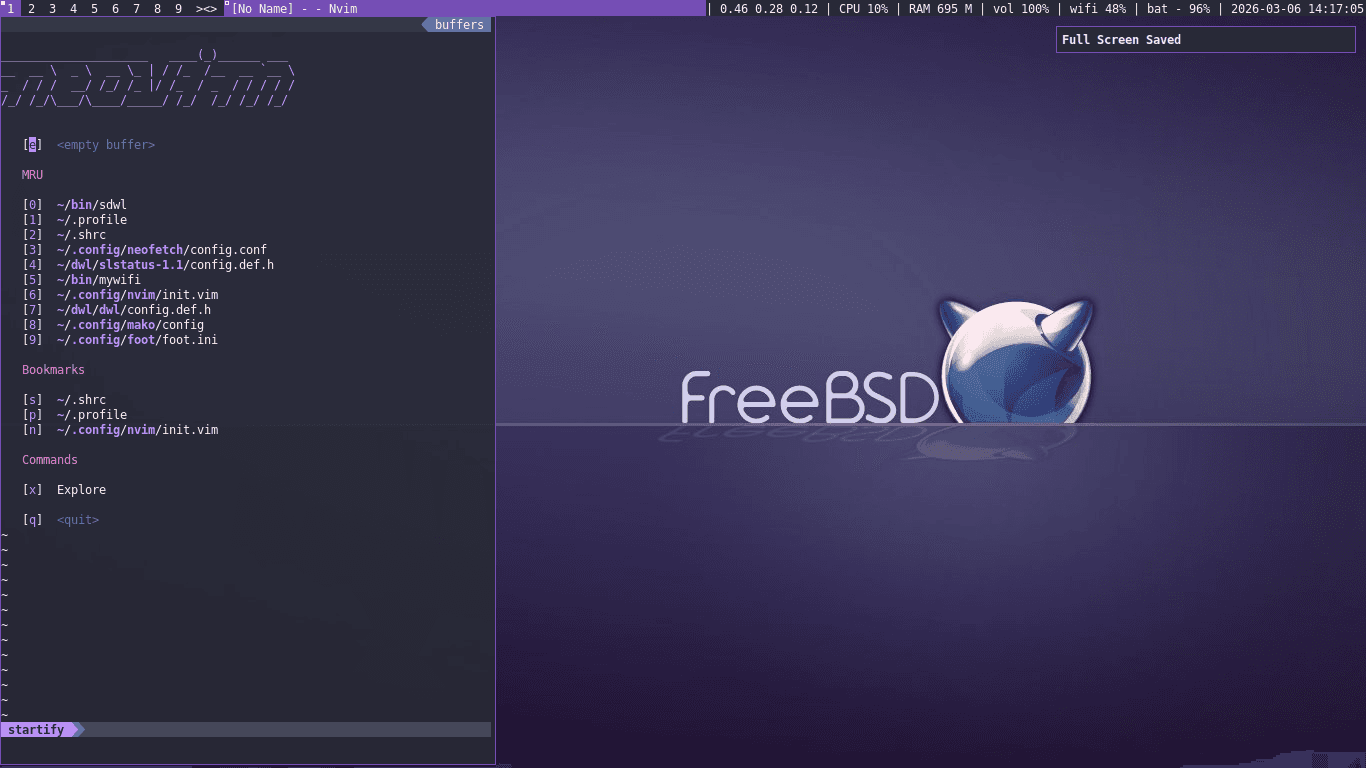

As efficient as it looks, its resource footprint is a different question. Running a quick benchmark with the same benchmark runner and CSV file as in my Practical AWK Benchmarking article gives the following results:

Statistical summary:

| cmd | Runtime [s] mean ± sdev | min | median | max | Jtr% | Peak memory [MB] mean ± sdev | min | median | max | Jtr% |
|---|---|---|---|---|---|---|---|---|---|---|
| gawk | 2.5438 ± 0.0251 | 2.5167 | 2.5488 | 2.5661 | 0.2 | 594.21 ± 0.25 | 593.96 | 594.20 | 594.46 | 0.0 |
| mawk | 2.5876 ± 0.0035 | 2.5842 | 2.5877 | 2.5911 | 0.0 | 291.24 ± 0.57 | 290.89 | 290.93 | 291.89 | 0.1 |
| nawk | 2.5799 ± 0.0124 | 2.5726 | 2.5730 | 2.5942 | 0.3 | 322.17 ± 0.17 | 322.03 | 322.12 | 322.35 | 0.0 |
| sort \| uniq | 2.4596 ± 0.0048 | 2.4556 | 2.4585 | 2.4649 | 0.0 | 368.64 ± 0.07 | 368.57 | 368.62 | 368.71 | 0.0 |

Normalized benchmarks:

| cmd | RT | PM | d | F |
|---|---|---|---|---|
| gawk | 1.04 | 2.04 | 1.04 | 2.12 |
| mawk | 1.05 | 1.00 | 0.05 | 1.05 |
| nawk | 1.05 | 1.11 | 0.12 | 1.16 |
| sort \| uniq | 1.00 | 1.27 | 0.27 | 1.27 |

Definitions - RT: Normalized Runtime; PM: Normalized Peak Memory; d: Euclidean Distance; F: Resource Footprint. (Calculations are based on 1 warmup and 3 test runs.)

Runtime is nearly identical across all variants: AWK offers no speed advantage over sort | uniq here, which is in fact 4-5% faster. The real difference is memory. mawk uses 21% less memory than sort | uniq and has the best overall resource footprint (F=1.05), making it the clear winner. nawk is a reasonable middle ground at F=1.16. gawk, despite being the most widely used AWK variant, performs worst, consuming double the memory of mawk and 61% more than sort | uniq, which is reflected in a footprint of F=2.12. The choice of AWK implementation matters as much as the algorithmic change; the wrong one erases any benefit.
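The same associative-array idea also removes the first barrier from the counting idiom sort | uniq -c. The sketch below is one way to do it, with count_lines as a hypothetical helper name: AWK tallies every line in a single pass, and because for (k in n) visits keys in an unspecified order, a final sort -rn ranks the results. That remaining sort only sees one line per distinct value, not the whole input.

```shell
#!/bin/sh
# count_lines is a hypothetical helper replacing "sort | uniq -c | sort -rn".
# n[$0]++ tallies each distinct line; memory grows with distinct lines only.
count_lines() {
    awk '{ n[$0]++ } END { for (k in n) print n[k], k }' "$@" |
    sort -rn    # for-in order is unspecified, so rank the tallies here
}

printf 'b\na\nb\nc\na\nb\n' | count_lines    # 3 b, then 2 a, then 1 c
```

As the benchmark above suggests, which AWK runs this matters for memory as much as the idea itself.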

7.2. Real parallelism with xargs and GNU parallel

When the bottleneck is not a barrier but throughput — processing many independent inputs simultaneously — pipelines need external orchestration. xargs -P and GNU parallel both achieve this by partitioning work across multiple processes.

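The find | xargs grep example from section 5 becomes parallel with a few additions:

find . -name "*.log" -print0 | xargs -0 -P "$(nproc)" -n 16 grep -H "ERROR"

-P "$(nproc)" lets xargs keep up to one grep process per available core running at a time. -n 16 (an arbitrary batch size) caps each invocation at 16 files; without it, xargs packs as many filenames as fit onto one command line and may start only a single grep. -H makes grep print the filename even when an invocation receives just one file. The pipeline structure remains intact, with find still streaming filenames and xargs still batching them, but the processing stage now uses all cores. Output from concurrent greps arrives in no particular order.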
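The role of -n is easy to miss, so here is a small timing experiment. With -P, xargs still packs as many arguments as fit onto each command line, so without -n it may start only one process. Each job below simply sleeps one second per argument it receives; elapsed, without_n, and with_n are hypothetical helper names, and date +%s is assumed to be available.

```shell
#!/bin/sh
# Time a command in whole seconds (hypothetical helper).
elapsed() { s=$(date +%s); "$@"; echo $(( $(date +%s) - s )); }

# Each sh invocation sleeps one second per argument xargs hands it.
without_n() { printf '%s\n' 1 2 3 4 | xargs -P 4 sh -c 'for f do sleep 1; done' sh; }
with_n()    { printf '%s\n' 1 2 3 4 | xargs -P 4 -n 1 sh -c 'for f do sleep 1; done' sh; }

echo "without -n: $(elapsed without_n) s"   # one invocation gets all 4 args, about 4 s
echo "with -n 1:  $(elapsed with_n) s"      # four invocations side by side, about 1 s
```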

GNU parallel offers finer control:

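find . -name "*.log" -print0 | parallel -0 grep -H "ERROR"

By default it runs one job per CPU core. It also collects each job's output and prints it as a unit, so lines from different jobs do not mix (add -k to keep the output in input order), can record every job's exit status with --joblog, and supports more complex partitioning strategies than xargs, such as splitting a single input stream into blocks with --pipe.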

The key distinction from pipeline concurrency is this: you are not getting parallelism from the pipe itself. You are orchestrating multiple independent processes from outside the pipeline. The pipe remains a single sequential channel; what changes is how many workers xargs or parallel hands its contents to at once.

8. Conclusion

Pipes are not primarily a performance tool. They are a composition tool: a way to connect programs that were never designed to work together, avoiding intermediate files and keeping memory usage in streaming stages flat regardless of input size. That property is powerful, and it is why pipelines designed in the 1970s can still process gigabytes of data efficiently today.

The limits are just as real. A pipeline is a single stream, moving in one direction, through stages that can go no faster than the slowest among them. When your problem needs multiple streams, data-parallel execution, or global state, the model stops helping and starts getting in the way. Knowing where that boundary lies, and reaching for the right tool when you cross it, is what makes pipelines useful rather than limiting.