Practical AWK Benchmarking

Performance Analysis of gawk, mawk, and nawk Using Pareto Frontier

"I think in terms of programming languages you get the most bang for your buck by learning AWK",

said Brian Kernighan, the K in AWK (Lex Fridman podcast #109). AWK was created at Bell Labs in 1977, and its name is derived from the surnames of its authors: Alfred Aho, Peter Weinberger, and Brian Kernighan. AWK is still widely used today: as a core tool, it is available on virtually any Unix or Unix-like system (Linux, BSDs, macOS, etc.). Its relevance extends to modern data pipelines, where AWK can be applied as an effective, schema-agnostic pre-processor.

Why Benchmark AWK

AWK is a concise, domain-specific programming language designed for efficient text processing via its pattern–action execution model. Its core strength lies in its implicit record- and field-level iteration: input is processed sequentially, one record at a time, with automatic field decomposition applied to each record. This design removes the need for explicit control flow for input traversal, allowing programs to express what transformation should occur rather than how to iterate. By abstracting record traversal, field iteration, and memory management, AWK allows concise expressions of C-like logic that are executed immediately when a pattern matches, making it exceptionally effective for rapid, ad-hoc data analysis and transformation.

While the AWK language is a standard, it exists in several distinct implementations, most notably:

gawk (GNU Awk): The feature-rich version maintained by Arnold Robbins. Default in Arch Linux, RHEL, Fedora.

mawk (Mike Brennan’s Awk): A speed-oriented implementation using a bytecode interpreter, currently maintained by Thomas Dickey. Default in Debian and many of its derivatives.

nawk (The "One True Awk"): The original implementation from the language’s creators, maintained by Brian Kernighan. Default in BSDs and macOS.

In most Linux distributions, the awk command is a symbolic link to a specific implementation. You can verify which variant is being used with: ls -l $(which awk).

This article benchmarks gawk, mawk, and nawk by evaluating both execution time and memory footprint through a Pareto Frontier analysis to determine their true resource efficiency. The primary catalyst for this comparison is Brian Kernighan’s 2025 update to nawk, which introduced CSV and UTF-8 support.

Benchmarking Approach

The benchmarks utilize functional one-liners that perform logical data analysis tasks relevant to the dataset. Rather than relying on synthetic loops or isolated instructions, these benchmarks are designed to reflect idiomatic AWK usage. This approach evaluates engine performance across various internal operations, including:

Data aggregation: Extensive use of associative arrays.

Control flow: Implementation of conditional logic and loops.

Text processing: Pattern matching and string manipulation through regex and built-in functions.

Arithmetic: Processing numeric fields for financial calculations.

Methodology

To evaluate the performance of the three AWK implementations, the benchmarking focused on two critical metrics: runtime and peak memory usage. Resource tracking was performed using cgmemtime, which is well suited to this purpose because it captures peak group memory consumption, including any spawned child processes. The benchmarking process was automated via benchgab.awk, my custom benchmark runner that handles warmups and multiple test runs. The script is built on top of cgmemtime and implemented in AWK, a deliberately fitting and self-referential choice for this study.

The workload consisted of a 179 MB CSV dataset containing 1.5 million lines and 14 fields. In the chosen dataset, commas appear only as field delimiters, which allows a like-for-like comparison across all three engines using the standard -F, flag, as mawk lacks --csv support. The fields are structured as follows:

1. Region, 2. Country, 3. Item Type, 4. Sales Channel, 5. Order Priority, 6. Order Date, 7. Order ID, 8. Ship Date, 9. Units Sold, 10. Unit Price, 11. Unit Cost, 12. Total Revenue, 13. Total Cost, 14. Total Profit.
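
To illustrate why this property of the dataset matters, here is a minimal sketch using a made-up record (not taken from the benchmark dataset) whose second field contains a quoted comma. Plain -F, splitting does not understand CSV quoting, so the field count comes out wrong:

```shell
# Hypothetical record: the quoted second field contains a comma.
line='Asia,"Korea, South",Snacks'

# -F, splits on every comma and ignores the quotes,
# so awk sees 4 fields where a CSV parser would see 3.
nf=$(printf '%s\n' "$line" | awk -F, '{ print NF }')
echo "$nf"   # prints 4
```

With a CSV-aware mode the same record would yield three fields, which is why -F, is only a fair common denominator when no field contains a comma.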

Each benchmark sequence included one initial warmup followed by ten recorded runs, with the mean and standard deviation derived from this ten-run sample.
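
The mean and standard deviation of a set of runs can be derived in a single AWK pass. The sketch below is not the code from benchgab.awk; it uses three made-up runtimes and assumes the sample standard deviation (n - 1 denominator), since the article does not state which variant was used:

```shell
# Hypothetical runtimes in seconds, one per recorded run (not measured data).
stats=$(printf '%s\n' 1.0 1.2 1.4 |
  awk '{ sum += $1; sumsq += $1 * $1 }
       END {
         mean = sum / NR
         # Sample variance with an (n - 1) denominator: an assumption.
         var = (sumsq - NR * mean * mean) / (NR - 1)
         if (var < 0) var = 0    # guard against tiny negative rounding error
         printf "%.3f ± %.3f\n", mean, sqrt(var)
       }')
echo "$stats"   # prints 1.200 ± 0.200
```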

The following table provides a summary of the specific versions and main characteristics of the three AWK implementations tested:

| Name | Version        | Binary Size | Installed Size | CSV | UTF-8 | Extensions |
|------|----------------|-------------|----------------|-----|-------|------------|
| gawk | 5.3.2          | 853 kB      | 3.60 MB        | yes | yes   | yes        |
| mawk | 1.3.4 20250131 | 179 kB      | 206 kB         | no  | no    | no         |
| nawk | 20251225       | 139 kB      | 145 kB         | yes | yes   | no         |

Benchmarks were conducted on an Arch Linux workstation powered by an AMD Ryzen 9 5900X CPU, using the Alacritty terminal within a dwm session.

Benchmarks

Understanding the Results

Each benchmark includes a result table. The metrics are defined as follows:

Runtime: The average execution time [s] followed by the standard deviation (±σ).

Peak Mem: The average peak group memory [MB] followed by the standard deviation (±σ).

RT: Normalized average runtime. The execution time relative to the fastest implementation (1.0 is the baseline).

PM: Normalized average group peak memory. The peak memory relative to the implementation with the lowest memory footprint (1.0 is the baseline).
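
As a worked example of the normalization, the following sketch recomputes the RT column from the mean runtimes reported in the benchmark #1 table. The input layout (name, then runtime) is just a convenience for this illustration:

```shell
# Mean runtimes [s] from the benchmark #1 table: name, runtime.
rt=$(printf '%s\n' 'gawk 1.395' 'mawk 1.241' 'nawk 1.267' |
  awk '{ name[NR] = $1; t[NR] = $2 + 0
         if (NR == 1 || t[NR] < best) best = t[NR] }   # track the fastest runtime
       END { for (i = 1; i <= NR; i++)
               printf "%s %.2f\n", name[i], t[i] / best }')
echo "$rt"
# gawk 1.12
# mawk 1.00
# nawk 1.02
```

PM is computed the same way, dividing each peak memory value by the smallest one.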

#1 Benchmark: duplicate lines

Objective: Identify and print the total number of duplicate lines within the dataset.

Targeted operations: Associative arrays.

awk -F, 'x[$0]++ { i++ } END { print i }'

Output: 108603

| #1   | Runtime [s]   | Peak Mem [MB] | RT   | PM   |
|------|---------------|---------------|------|------|
| gawk | 1.395 ± 0.044 | 551.16 ± 0.47 | 1.12 | 1.98 |
| mawk | 1.241 ± 0.030 | 290.59 ± 0.17 | 1.00 | 1.04 |
| nawk | 1.267 ± 0.007 | 278.90 ± 0.21 | 1.02 | 1.00 |
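
The x[$0]++ idiom is compact enough to deserve a small demonstration. In this sketch on toy input (not the benchmark dataset), the pattern evaluates to the count seen before the increment, so it is false for the first occurrence of a line and true for every repeat:

```shell
# Toy input: "a" appears three times, so two of its lines are duplicates.
dups=$(printf '%s\n' a b a a |
  awk 'x[$0]++ { i++ }        # true only when the line was seen before
       END { print i + 0 }')
echo "$dups"   # prints 2
```

The i + 0 in this sketch is a small addition that prints 0 instead of an empty line when the input has no duplicates. The memory cost is visible in the table: every distinct line is stored as an array key.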

#2 Benchmark: most units sold by country

Objective: Find the country with the highest total units sold, excluding duplicate entries.

Targeted operations: Multi-array processing and max-value search.

awk -F, 'NR > 1 && !x[$0]++ { u[$2] += $9 } END { for (i in u) if (u[i] > u_max) { u_max = u[i]; c = i } print c, u_max }'

| #2   | Runtime [s]   | Peak Mem [MB] | RT   | PM   |
|------|---------------|---------------|------|------|
| gawk | 2.717 ± 0.018 | 551.12 ± 0.31 | 1.62 | 1.98 |
| mawk | 1.678 ± 0.037 | 290.71 ± 0.28 | 1.00 | 1.04 |
| nawk | 2.175 ± 0.007 | 278.84 ± 0.22 | 1.30 | 1.00 |

#3 Benchmark: highest profit margin

Objective: Identify the order ID with the highest per-unit profit margin, i.e. (unit price - unit cost) / unit price.

Targeted operations: Floating-point arithmetic and conditional max-value tracking.

awk -F, 'NR > 1 { pm = ($10 - $11) / $10; if (pm > pm_max) { pm_max = pm; id = $7 }} END { print id }'

Output: 667593514

| #3   | Runtime [s]   | Peak Mem [MB] | RT   | PM   |
|------|---------------|---------------|------|------|
| gawk | 1.783 ± 0.006 | 0.74 ± 0.01   | 3.02 | 2.69 |
| mawk | 0.591 ± 0.005 | 0.62 ± 0.13   | 1.00 | 2.25 |
| nawk | 1.340 ± 0.005 | 0.28 ± 0.08   | 2.27 | 1.00 |

#4 Benchmark: count European countries

Objective: Count unique country names within the Europe region using exact string matching.

Targeted operations: Exact string matching and associative array lookups.

awk -F, '$1 == "Europe" { eu[$2]++ } END { for (country in eu) n++; print n }'

Output: 48

| #4   | Runtime [s]   | Peak Mem [MB] | RT   | PM   |
|------|---------------|---------------|------|------|
| gawk | 0.513 ± 0.005 | 0.74 ± 0.00   | 1.46 | 1.89 |
| mawk | 0.351 ± 0.004 | 0.63 ± 0.12   | 1.00 | 1.62 |
| nawk | 1.284 ± 0.006 | 0.39 ± 0.12   | 3.66 | 1.00 |

#5 Benchmark: count European countries (regex)

Objective: Count unique country names within the Europe region using regex matching.

Targeted operations: Regex matching and associative array lookups.

awk -F, '$1 ~ /Europe/ { eu[$2]++ } END { for (country in eu) n++; print n }'

Output: 48

| #5   | Runtime [s]   | Peak Mem [MB] | RT   | PM   |
|------|---------------|---------------|------|------|
| gawk | 0.524 ± 0.017 | 0.76 ± 0.14   | 1.49 | 1.42 |
| mawk | 0.351 ± 0.006 | 0.67 ± 0.11   | 1.00 | 1.24 |
| nawk | 1.420 ± 0.007 | 0.54 ± 0.11   | 4.04 | 1.00 |

#6 Benchmark: number of orders in date range

Objective: Count the number of orders (excluding duplicates) between 3/1/2014 and 3/31/2015.

Targeted operations: String manipulation functions, relational string comparisons, and associative array deduplication.

awk -F, 'NR > 1 && !x[$0]++ { split($6, a, "/"); d = sprintf("%d%02d%02d", a[3], a[1], a[2]); if (d >= "20140301" && d <= "20150331") n++ } END { print n }'

Output: 203060

| #6   | Runtime [s]   | Peak Mem [MB] | RT   | PM   |
|------|---------------|---------------|------|------|
| gawk | 4.106 ± 0.043 | 551.14 ± 0.21 | 1.95 | 1.98 |
| mawk | 2.107 ± 0.016 | 290.76 ± 0.27 | 1.00 | 1.04 |
| nawk | 3.434 ± 0.019 | 278.87 ± 0.26 | 1.63 | 1.00 |
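
The date handling in benchmark #6 relies on turning M/D/YYYY into a zero-padded YYYYMMDD string, so that plain string comparison orders dates correctly. A minimal sketch on three made-up dates (the variable name inrange is introduced here purely for illustration):

```shell
# Hypothetical Order Date values in M/D/YYYY form.
keys=$(printf '%s\n' '3/1/2014' '12/25/2014' '4/1/2015' |
  awk '{ split($0, a, "/")
         d = sprintf("%d%02d%02d", a[3], a[1], a[2])   # YYYYMMDD string key
         inrange = (d >= "20140301" && d <= "20150331") ? "in" : "out"
         print d, inrange }')
echo "$keys"
# 20140301 in
# 20141225 in
# 20150401 out
```

Because both operands are strings of equal length, the lexicographic comparison agrees with chronological order.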

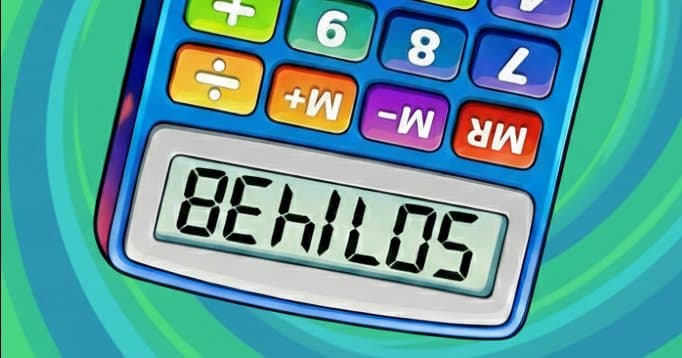

Results

Geometric Mean and Normalization

To provide a representative comparison across multiple benchmarks, the Geometric Mean for the normalized RT and PM values was calculated. The geometric mean is the mathematically appropriate choice for averaging ratios or normalized values, as it ensures that relative improvements are weighted consistently across all tests. Unlike the arithmetic mean, this approach prevents outliers in absolute execution time from disproportionately skewing the aggregate performance profile.
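
As a worked example, the geometric mean can be computed in AWK as the exponential of the mean logarithm. The sketch below applies this to the normalized peak memory (PM) values of gawk and mawk, copied from the six result tables above. The input layout is an assumption made for this illustration:

```shell
# Normalized peak memory (PM) per benchmark, copied from the six result tables.
gm=$(printf '%s\n' 'gawk 1.98 1.98 2.69 1.89 1.42 1.98' \
                   'mawk 1.04 1.04 2.25 1.62 1.24 1.04' |
  awk '{ s = 0
         for (i = 2; i <= NF; i++) s += log($i)   # sum of natural logs
         printf "%s %.2f\n", $1, exp(s / (NF - 1)) }')
echo "$gm"
# gawk 1.96
# mawk 1.31
```

Summing logarithms rather than multiplying the ratios directly keeps the computation well-behaved when there are many benchmarks.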

Evaluation Metrics

To synthesize these normalized results into a single actionable score, I have applied two evaluation metrics:

Euclidean Distance (d): Measures the geometric distance from the "Ideal Point" (1,1). A lower d indicates a more balanced implementation that is close to being the best in both speed and memory simultaneously.

Resource Footprint (F): Calculated as RT×PM. This represents the total resource footprint; lower values indicate a more efficient use of system resources to complete the same task.
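
Both metrics are simple enough to sketch in a few lines of AWK. The example below uses the rounded RT and PM values from the summary table as input, so its results can differ from the published ones in the last digit:

```shell
# name, RT, PM: geometric means as listed in the summary table.
metrics=$(printf '%s\n' 'gawk 1.80 1.96' 'mawk 1.00 1.31' 'nawk 2.13 1.00' |
  awk '{ dx = $2 - 1; dy = $3 - 1
         d = sqrt(dx * dx + dy * dy)   # Euclidean distance from the ideal point (1,1)
         f = $2 * $3                   # resource footprint RT x PM
         printf "%s d=%.2f F=%.2f\n", $1, d, f }')
echo "$metrics"
# gawk d=1.25 F=3.53
# mawk d=0.31 F=1.31
# nawk d=1.13 F=2.13
```

The gawk footprint comes out as 3.53 here rather than the 3.51 listed in the summary table, presumably because the table was computed from unrounded inputs.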

Summary Table

The following table summarizes the overall performance of the three AWK engines based on the geometric mean of all normalized benchmarks:

| Summary | RT   | PM   | d    | F    |
|---------|------|------|------|------|
| gawk    | 1.80 | 1.96 | 1.25 | 3.51 |
| mawk    | 1.00 | 1.31 | 0.31 | 1.31 |
| nawk    | 2.13 | 1.00 | 1.13 | 2.13 |

Definitions - RT: Normalized Runtime; PM: Normalized Peak Memory; d: Euclidean Distance; F: Resource Footprint

Discussion

The benchmarking results across six diverse objectives show a clear and consistent performance profile for each implementation. Across all six benchmarks, mawk was the fastest, while nawk maintained the lowest memory footprint. Conversely, gawk exhibited the highest memory usage in every benchmark. However, gawk's relative speed is more consistent than nawk's: its normalized runtime stays between 1.12 and 3.02, whereas nawk's ranges from 1.02 to 4.04. nawk nearly matches mawk on the plain deduplication workload (#1), but it falls behind on arithmetic-heavy field processing (#3, RT 2.27) and is slowest of all at string comparison and regex matching (#4 and #5, RT 3.66 and 4.04).

These individual performance patterns serve as the foundation for my aggregate metrics, where the trade-off between speed and memory is formally quantified.

While the Euclidean distance (d) provides a useful preliminary indication of effectiveness, relying on it alone can be misleading. For instance, the Euclidean distances for gawk (1.25) and nawk (1.13) are relatively close, yet their Resource Footprints (F) reveal a significant disparity: gawk's footprint is nearly 65% larger (3.51 vs. 2.13).

This limitation necessitates a more robust analysis via the Pareto frontier.

To visualize the trade-offs, I plotted the normalized values on a 2D coordinate system where the x-axis represents the normalized runtime (RT) and the y-axis represents normalized peak memory (PM). The "Ideal Point" is located at (1,1), representing an implementation that is simultaneously the fastest and the most memory-efficient.

Graph: The Pareto Frontier of AWK implementations: Visualizing the optimal equilibrium between execution speed and memory footprint.

The Pareto frontier represents the boundary of "non-dominated" solutions: implementations where you cannot improve one metric (like speed) without degrading another (like memory). In this study, mawk and nawk define the frontier: mawk is the choice for raw speed, while nawk is the choice for minimal footprint. gawk, however, is positioned away from this boundary. An implementation is "dominated" when a single alternative is at least as good on both axes and strictly better on one, and mawk is exactly that alternative: it is both faster (RT 1.00 vs. 1.80) and lighter on memory (PM 1.31 vs. 1.96). gawk is therefore sub-optimal in terms of raw resource efficiency.
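
The dominance test can be sketched as a pairwise comparison over the summary values. This is an illustration of the general technique, not the code used to produce the graph, and the output wording is made up for this example:

```shell
# name, RT, PM: geometric means as listed in the summary table.
frontier=$(printf '%s\n' 'gawk 1.80 1.96' 'mawk 1.00 1.31' 'nawk 2.13 1.00' |
  awk '{ name[NR] = $1; rt[NR] = $2 + 0; pm[NR] = $3 + 0 }
       END {
         for (i = 1; i <= NR; i++) {
           by = ""
           # j dominates i: no worse on both axes, strictly better on one.
           for (j = 1; j <= NR; j++) {
             if (j != i && rt[j] <= rt[i] && pm[j] <= pm[i] &&
                 (rt[j] < rt[i] || pm[j] < pm[i])) {
               by = name[j]
             }
           }
           if (by != "") {
             print name[i], "dominated by", by
           } else {
             print name[i], "on frontier"
           }
         }
       }')
echo "$frontier"
# gawk dominated by mawk
# mawk on frontier
# nawk on frontier
```

Note that nawk does not dominate gawk, since nawk's normalized runtime (2.13) is higher than gawk's (1.80).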

Conclusion

The data confirms that the "best" AWK implementation is a calculated trade-off between throughput and resource overhead. Within the Unix philosophy of choosing the right tool for the job, each engine serves a distinct operational profile.

mawk is the powerhouse for high-volume data. If your primary bottleneck is execution speed, its bytecode engine is the one to beat: it was the fastest in all six benchmarks. It anchors the speed end of the Pareto frontier and has the lowest overall resource footprint (F = 1.31).

nawk is the go-to for minimalist environments. While it prioritizes simplicity over the heavy lifting of complex regex or string manipulation, its memory footprint is remarkably small and predictable. It is the definitive choice for systems where memory overhead is a strictly limited resource.

gawk offers a more nuanced value proposition. While it is Pareto-dominated by mawk in these benchmarks, that overhead pays for a much broader feature set (CSV mode, UTF-8 support, and extensions), which can outweigh its increased resource consumption.

Across various workflows, from data science pipelines to system automation, mawk provides the highest performance return for most standard tasks. Ultimately, these results show that the choice of engine should be a deliberate decision: use mawk for speed, nawk for a light footprint, and gawk when you need its extended toolkit.