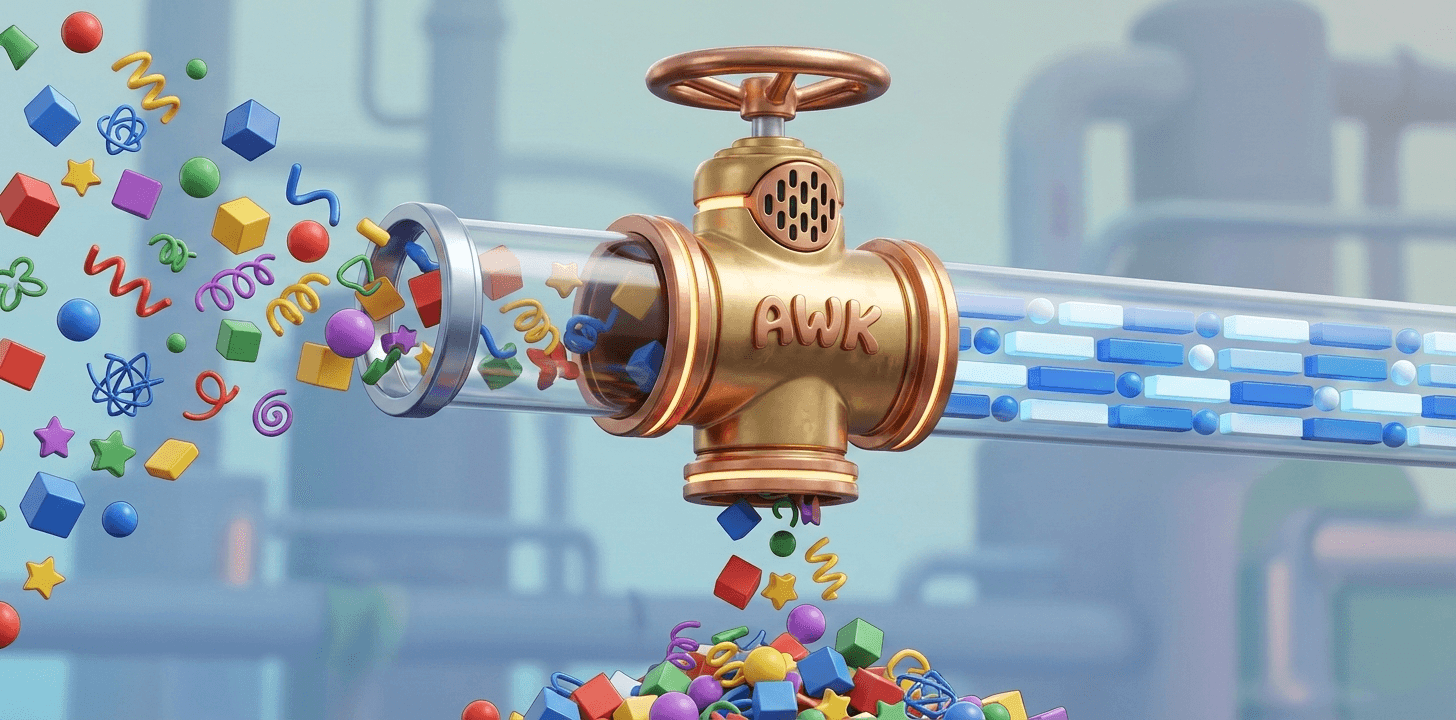

AWK: the Zero-Setup Pre-Processor

A Phase-2 Sentinel for Modern Data Pipelines

Modern data pipelines most often fail at their beginning, not their end. A malformed record, an unexpected delimiter, or an encoding anomaly can cause otherwise robust processing engines to abort after consuming significant computational resources. These failures are not rare edge cases; they are a predictable consequence of feeding untrusted, heterogeneous input into systems that implicitly assume structural coherence.

Preventing such aborts requires a tool that operates before schema, before types, and before semantic assumptions are applied: schema-agnostic, streaming, low in resource footprint, and available everywhere data flows. It must tolerate mixed record structures, inconsistent delimiters, and partial corruption without imposing premature interpretation. Such a tool already exists—and has existed since the 1970s. Its name is AWK.

The Contemporary Data Pipeline Model

Modern data systems are commonly described using a five-phase pipeline:

Phase 1. Ingest – raw data arrival from external sources

Phase 2. Validate – quality checks and correctness guarantees

Phase 3. Transform – schema enforcement, normalization, columnar operations

Phase 4. Analyze – analytics, feature engineering, ML preparation

Phase 5. Consume – BI, reporting, downstream products

This model is widely recognized across data engineering practice, even if the terminology varies slightly between platforms and tools. Crucially, validation is now treated as a first-class concern: data contracts, expectations, and quality gates are standard components of modern stacks.

In practice, however, validation is often implemented primarily as a semantic operation—type checks, nullability constraints, and value ranges—implicitly presuming that incoming data already satisfies basic structural requirements.

Structural Validation Comes Before Semantic Validation

Validation can be divided into two fundamentally different layers:

Structural (geometric) validation: concerned with physical integrity, meaning record boundaries, delimiter consistency, field counts, encoding correctness, and basic layout.

Semantic validation: concerned with meaning, including data types, ranges, domain rules, and business logic.

Semantic validation depends on structural integrity. A columnar engine cannot validate a date column if unescaped delimiters have shifted field boundaries. A schema-on-read system cannot enforce types if records are misaligned or partially corrupted. The pipeline fails before semantics can even be evaluated.
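The field-shift problem is easy to make visible. The sketch below assumes a hypothetical three-column, comma-delimited feed in which one record carries an unescaped comma; the function name `field_count_report` and the sample rows are illustrative only. It splits naively on commas and does not interpret quoting, which is the point: it probes structure rather than parsing CSV.

```shell
# Tally records by field count (naive comma split, no quote handling).
field_count_report() {
  awk -F',' '
    { count[NF]++ }                      # one counter per distinct field count
    END {
      for (n in count) printf "%d fields: %d records\n", n, count[n]
    }
  ' | sort -n                            # for-in order is unspecified, so sort
}

# Hypothetical sample: the third row has an unescaped comma in the name.
printf '%s\n' \
  'id,name,date' \
  '1,Alice,2024-01-05' \
  '2,Smith, Bob,2024-01-06' \
  '3,Carol,2024-01-07' | field_count_report
# 3 fields: 3 records
# 4 fields: 1 records
```

More than one field count in a file that is supposed to be uniformly tabular is a strong hint that a downstream date or numeric column will be read from the wrong position.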

A related failure mode is tool misplacement. pandas loads data eagerly into memory by default and is architecturally aligned with Phase 4 workloads; using it in Phase 3, where schema enforcement and large-scale transformation are expected, can lead to memory exhaustion on large datasets. Conversely, applying Polars or DuckDB to raw input in Phase 2, before structural validation, tends to end in parse failures rather than useful diagnostics.

This highlights the absence of an explicit Phase-2 structural validation layer in many modern data pipelines.

Phase 2, Explicitly: Structural Validation

Within the standard ingest → validate → transform model, structural validation is the earliest and most failure-prone part of Phase 2. Its purpose is not to interpret data, but to determine whether the data is fit to be interpreted at all.

A tool operating at this layer must satisfy specific constraints:

operate on untrusted, possibly malformed input

process data as a stream, with constant memory usage

make minimal assumptions about structure

integrate cleanly into automated pipelines

fail fast and produce actionable diagnostics

This is where AWK belongs.

AWK’s Role in Phase 2

AWK is not an analytics tool, a transformation engine, or a schema system. Its strength lies earlier — inside Phase 2, before schema and semantics are applied.

Architecturally, AWK functions as a pre-schema validation sentinel.

It processes text streams sequentially, and as long as a program does not accumulate per-record state, its memory usage is independent of input size. A multi-hundred-gigabyte file can then be inspected using the same resources as a kilobyte-scale sample. This property alone makes AWK suitable for structural inspection of datasets that exceed available memory.
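As a minimal sketch of such a pass, the following profile keeps only a handful of scalar variables, so its memory use does not depend on how many records it reads. The function name `stream_profile`, the pipe delimiter, and the sample rows are assumptions made for illustration.

```shell
# Single-pass profile of a pipe-delimited stream using scalar state only.
stream_profile() {
  awk -F'|' '
    NR == 1 { min_nf = NF; max_nf = NF }       # seed the bounds from line 1
    {
      if (NF < min_nf) min_nf = NF
      if (NF > max_nf) max_nf = NF
      if (length($0) > max_len) { max_len = length($0); max_line = NR }
    }
    END {
      printf "records=%d min_fields=%d max_fields=%d longest_line=%d (%d chars)\n",
             NR, min_nf, max_nf, max_line, max_len
    }
  '
}

printf '%s\n' 'a|b|c' 'd|e' 'f|g|h|i' | stream_profile
# records=3 min_fields=2 max_fields=4 longest_line=3 (7 chars)
```

Note that `length` counts bytes in some implementations and characters in others, so the last figure should be read as approximate for non-ASCII input.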

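Its footprint is minimal, on the order of hundreds of kilobytes, and its availability is close to universal. POSIX specifies awk as a standard utility, so Linux distributions, macOS, BSD variants, most container base images, and CI runners provide an implementation by default (gawk, mawk, BusyBox awk, or the original "one true awk"); only deliberately stripped-down images omit it. In most environments, no setup, dependency resolution, or runtime configuration is required, although scripts meant to run everywhere should stay within POSIX features.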

For a tool whose purpose is to guard the entrance to a pipeline, this matters. Phase-2 components should be reliable, predictable, and easy to deploy everywhere.

Why Textual Validation Matters

Real-world data is rarely homogeneous. Files often contain:

headers and footers with different formats

multiple delimiter conventions within a single stream

varying field counts by record type

embedded structured blocks inside free-form text

multi-line records such as logs or stack traces

Specialized validators and parsers typically assume consistency. When that assumption fails, they abort. AWK does not impose such constraints. It operates on patterns, not schemas, allowing validation logic to adapt dynamically to what the data actually contains.

This does not mean that AWK magically “fixes” broken data. It means that AWK can observe, classify, and assert structural properties before downstream tools are engaged. It can count anomalies, flag record classes, detect shifts in layout, and isolate segments that would cause rigid parsers to fail.
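The following sketch shows what "observe and classify" can look like in practice. It assumes a hypothetical feed in which the first field tags the record type (`H` header, `D` detail, `T` trailer) and each type has its own expected field count; the tags, the counts, and the function name `classify_records` are invented for illustration.

```shell
# Classify records by their leading tag and count malformed ones per class.
classify_records() {
  awk -F'|' '
    {
      if      ($1 == "H") { class = "header";  want = 3 }
      else if ($1 == "D") { class = "detail";  want = 4 }
      else if ($1 == "T") { class = "trailer"; want = 2 }
      else                { class = "unknown"; want = -1 }   # no expectation

      seen[class]++
      if (want >= 0 && NF != want) bad[class]++
    }
    END {
      n = split("header detail trailer unknown", order, " ")
      for (i = 1; i <= n; i++)
        printf "%s: %d seen, %d malformed\n",
               order[i], seen[order[i]], bad[order[i]]
    }
  '
}

printf '%s\n' \
  'H|feed|2024-01-05' \
  'D|1|Alice|42' \
  'D|2|Bob' \
  'garbage line' \
  'T|2' | classify_records
# header: 1 seen, 0 malformed
# detail: 2 seen, 1 malformed
# trailer: 1 seen, 0 malformed
# unknown: 1 seen, 0 malformed
```

Nothing is repaired here. The output simply tells the next stage which record classes exist and which of them would break a parser that expects a single layout.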

This textual, pattern-first perspective is precisely what is required at the earliest stage of validation.

Comparison with Specialized Streaming Tools

A number of contemporary command-line tools address aspects of streaming data inspection and manipulation. Utilities such as Miller, csvkit, xsv, qsv, xan, and related programs are widely used for processing delimited data, particularly CSV, and several of them are built for high throughput. They excel when input conforms to a recognizable tabular structure and when field boundaries, quoting rules, and record layouts are already well defined.

These tools are optimized for structured streams: they provide fast parsing, expressive transformations, and strong guarantees once basic format assumptions are met. In that role, they are highly effective components of modern data pipelines.

Their limitation, in the context of early validation, is not capability but scope. They presuppose that structural coherence already exists. When confronted with inconsistent field counts, shifting delimiters, malformed records, or mixed-format sections, they typically fail early or require pre-cleaned input.

AWK occupies a different position. It does not assume a stable schema or even a stable record shape. By operating on text and patterns rather than fixed structures, it can observe, classify, and assert properties of a stream before any format-specific interpretation is imposed. This makes it suitable for the earliest stage of validation, where the primary question is not how to transform the data, but whether the data can be safely interpreted at all.
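A shifting delimiter is one property that can be observed before any format is assumed. The sketch below is a heuristic, not a parser: for each line it counts four candidate delimiters and reports the line numbers at which the dominant one changes. The candidate set and the function name `delimiter_profile` are assumptions, and delimiters that appear inside quoted text will mislead it.

```shell
# Report where the most frequent candidate delimiter changes within a stream.
delimiter_profile() {
  awk '
    # gsub returns the number of substitutions; s is a local copy of the line.
    function count(s, re) { return gsub(re, "", s) }
    {
      best = "none"; max = 0                 # ties keep the earlier candidate
      n = count($0, ",");   if (n > max) { max = n; best = "comma" }
      n = count($0, ";");   if (n > max) { max = n; best = "semicolon" }
      n = count($0, "\t");  if (n > max) { max = n; best = "tab" }
      n = count($0, "[|]"); if (n > max) { max = n; best = "pipe" }
      if (best != prev) {
        printf "line %d: dominant delimiter is %s\n", NR, best
        prev = best
      }
    }
  '
}

printf '%s\n' 'a,b,c' 'd,e,f' 'g;h;i' 'j|k|l' | delimiter_profile
# line 1: dominant delimiter is comma
# line 3: dominant delimiter is semicolon
# line 4: dominant delimiter is pipe
```

A single line of output means the stream is at least consistent in its delimiter; several lines mark the boundaries of mixed-format sections that a CSV-specific tool would need to have split out first.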

The Data Assertion Pattern

Robust pipelines treat validation as a gate, not a side effect. A practical way to implement this is through data assertions: small, focused validation programs that return explicit success or failure signals.

These assertions execute immediately after ingestion and before any expensive processing begins. If a structural invariant is violated—unexpected field counts, malformed records, encoding issues—the pipeline fails fast, with diagnostics that point directly to the source of the problem.

AWK is well suited to this pattern. Its exit codes integrate naturally with shell pipelines and workflow orchestrators, its diagnostics can include precise line numbers, pattern matches, and anomaly counts, and its simplicity reduces the operational risk of the validation layer itself.
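One possible shape for such an assertion is sketched below. The function name `assert_field_count`, its argument order, and the cap of five detailed diagnostics are illustrative choices rather than an established convention. Diagnostics go to standard error through `/dev/stderr`, which gawk and mawk handle internally and which exists as a device file on most Unix-like systems.

```shell
# assert_field_count EXPECTED [DELIM]
# Reads stdin; exits 0 if every record has EXPECTED fields, 1 otherwise.
assert_field_count() {
  awk -F"${2:-,}" -v want="$1" '
    NF != want {
      bad++
      if (bad <= 5)      # cap the detail so a badly broken file stays readable
        printf("line %d: %d fields, expected %d\n", NR, NF, want) > "/dev/stderr"
    }
    END {
      if (bad) {
        printf("FAIL: %d of %d records malformed\n", bad, NR) > "/dev/stderr"
        exit 1           # non-zero status is what the pipeline gate reacts to
      }
    }
  '
}

# Usage as a gate: the expensive stage would run only in the first branch.
if printf '%s\n' '1,a,x' '2,b' '3,c,z' | assert_field_count 3; then
  echo "gate passed"
else
  echo "gate failed"
fi
```

With the sample input above, the second record has two fields, so the assertion writes its diagnostics to standard error and the `else` branch runs. An orchestrator needs nothing more than the exit status to decide whether to continue.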

Unix Composition and Streaming Architecture

AWK operates within the Unix toolchain and composes naturally with core utilities such as grep, sed, sort, uniq, and cut through pipes and standard streams. At the same time, it is fundamentally different from these tools. AWK is a complete programming language in its own right: Turing-complete, stateful, and capable of expressing non-trivial control flow, aggregation, and structural analysis logic.

This dual nature is central to its role in data pipelines. AWK participates in Unix composition like a classic streaming filter, yet it can encapsulate validation logic that would otherwise require custom programs or heavier runtimes. Pattern matching, conditional execution, state carried across records, and multi-line context can all be handled within a single streaming pass, without abandoning the simplicity of standard input and output.
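To illustrate state carried across records, the sketch below treats a log in which each logical record starts with an ISO-style date and any other line (a stack-trace frame, for example) continues the record before it. The date convention, the function name `log_record_profile`, and the sample lines are assumptions. The character classes are written out one by one because older mawk versions do not support interval expressions such as `{4}`.

```shell
# Group physical lines into logical log records in one streaming pass.
log_record_profile() {
  awk '
    # Remember the largest logical record seen so far.
    function track() {
      if (size > max_size) { max_size = size; max_start = start }
    }
    /^[0-9][0-9][0-9][0-9]-[0-9][0-9]-[0-9][0-9] / {
      records++; start = NR; size = 1; track()
      next
    }
    records == 0 { orphans++; next }     # continuation with no parent record
    { size++; track() }                  # continuation of the current record
    END {
      printf "records=%d orphans=%d largest=%d lines (starts at line %d)\n",
             records, orphans, max_size, max_start
    }
  '
}

printf '%s\n' \
  'Traceback fragment' \
  '2024-01-05 10:00:00 INFO start' \
  '2024-01-05 10:00:01 ERROR failed' \
  '  at foo(Foo.java:10)' \
  '  at bar(Bar.java:20)' \
  '2024-01-05 10:00:02 INFO done' | log_record_profile
# records=3 orphans=1 largest=3 lines (starts at line 3)
```

The orphan count is the structurally interesting number: a continuation line with no preceding record usually means the file was truncated or rotated in the middle of a record.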

Composition remains a strength rather than a constraint. AWK can act as a thin structural probe between other tools, or as a self-contained validation stage that replaces entire chains of simpler utilities. In both cases, execution remains streaming, memory usage remains bounded, and behavior remains transparent and inspectable.

AWK does not compete with modern data tools. It complements them by ensuring that the assumptions they rely on are actually satisfied.

Conclusion

Modern data pipelines increasingly recognize validation as essential, yet often conflate semantic correctness with structural integrity. In practice, structure must be established before meaning can be enforced. Treating malformed or heterogeneous input as if it were already schema-ready remains a common source of avoidable failure.

AWK occupies a precise and early position inside Phase 2 of the pipeline: structural, pre-schema validation. Its streaming execution model, minimal assumptions, constant memory usage, and universal availability make it well suited to this role. These properties are not historical artifacts but practical advantages when dealing with untrusted data at scale.

AWK’s continued relevance is not a matter of nostalgia, but of architectural fit. It does not replace modern data tools, nor does it compete with them. Instead, it operates where many pipelines remain weakest—at the point where data is first examined, before interpretation begins.

Further articles will explore concrete applications of AWK in modern data workflows and validation scenarios. These discussions continue at AwkLab, where AWK’s role in contemporary data engineering is examined in depth.